April 2022 Update

An overview of our updates, events, and publications for this month, as well as our regular weekly review of articles on terrorist and violent extremist use of the internet, counterterrorism, digital rights, and tech policy.

Job Alert!

- We are looking for a Communications Associate to shape and deliver our communications strategy for media and key stakeholders. This role is suited to early-career candidates with excellent comms skills and an interest in counterterrorism and tech policy. Find out more and apply here.

Webinar Alert!

- Join us on 28 April at 5PM BST for our next TAT-GIFCT webinar, “Audio Content & Detection: Moderation Challenges and Opportunities with Existing Audio Detection Models”. More information about the speakers and the agenda will be shared soon. You can register here!

Upcoming Events

- We are pleased to announce that we will be organising a panel at the 2022 Terrorism and Social Media Conference (TASM) organised by the Cyber Threats Research Centre (CYTREC). At the Conference, we will be presenting new reports from our OSINT, Research and Policy teams. Check out the full TASM schedule here!

- We are pleased to say that Tech Against Terrorism will be present at RightsCon 2022, where we will organise a private meeting on “Terrorist Use of the Internet: creating a digital-rights safeguarding response to terrorist operated websites”. This meeting will be based on our landmark report on The Threat of Terrorist and Violent Extremist Operated Websites and will aim to inform the development of guiding principles to tackle this pressing issue.

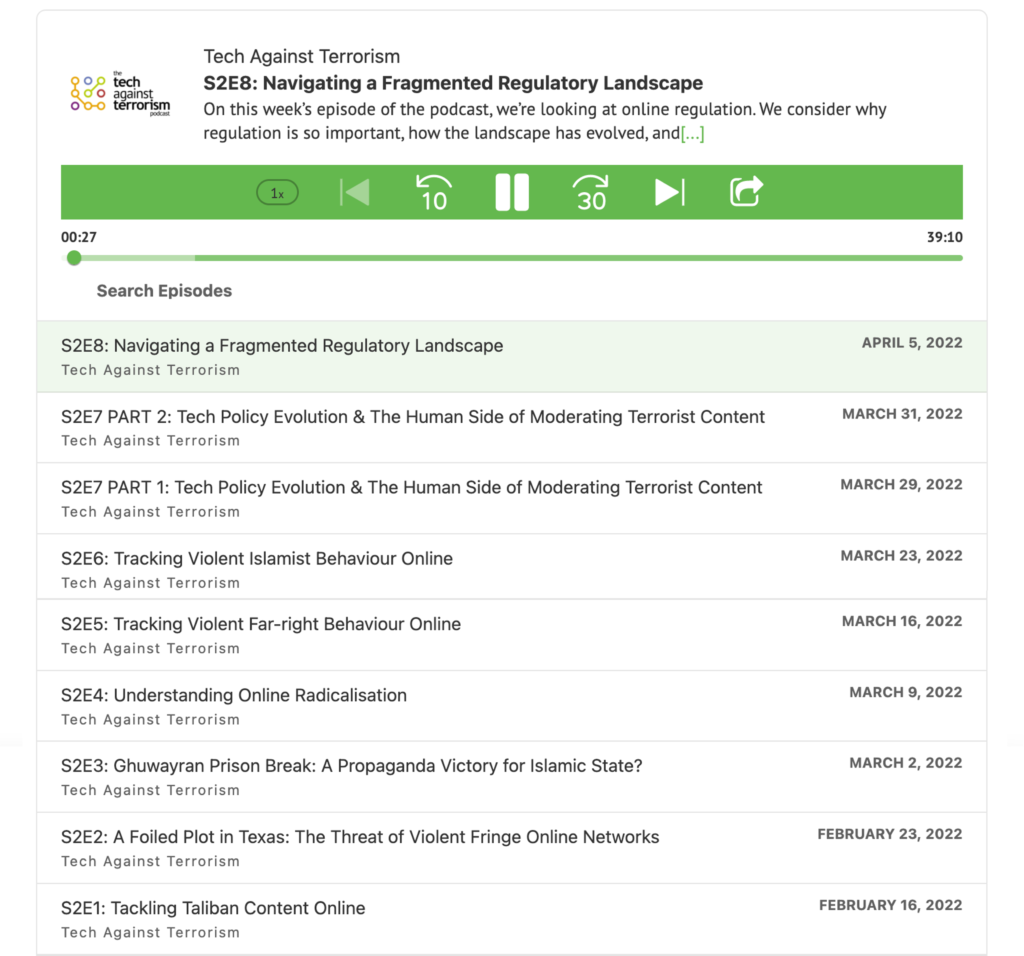

The Tech Against Terrorism Podcast

- Tune in to Episode 8: “Navigating a Fragmented Regulatory Landscape” here, or wherever you get your podcasts. Our host, Anne Craanen, is joined by Luca Bertuzzi, Asha Allen, and Jacob Berntsson to discuss why regulation is so important and yet so complicated to get right. They also consider how the regulatory landscape has evolved, and focus on some of the specific challenges around regulating terrorist content online.

- You can now find all Tech Against Terrorism podcast episodes on our Knowledge Sharing Platform.

Knowledge Sharing Platform

- The Knowledge Sharing Platform (KSP) is seeking feedback from its users on the KSP’s resources and features. This feedback form aims to understand your current experience of the Knowledge Sharing Platform, in terms of its resources and features, and how these can be improved or shaped going forward. You can access the form here.

ICYMI

An overview of our events, publications, and media coverage from the past month

Events

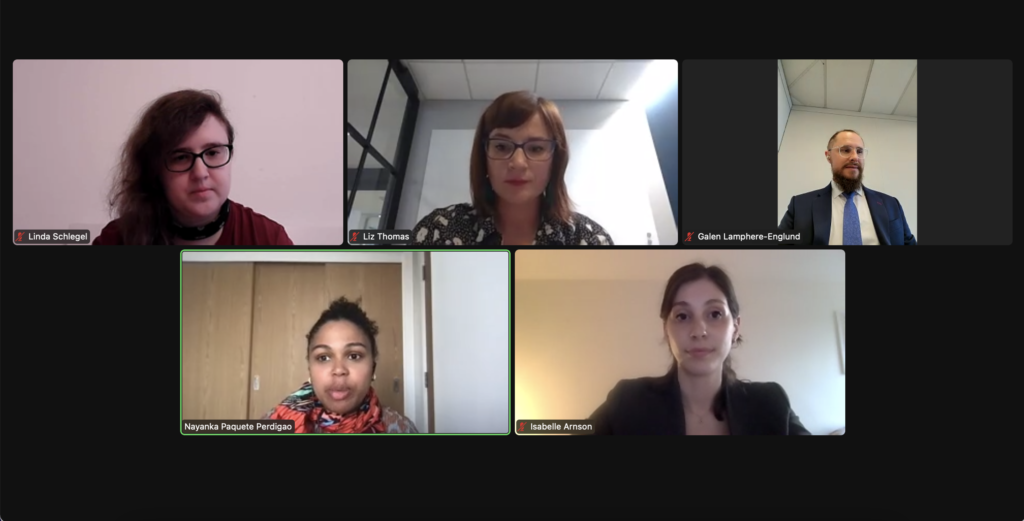

- Thank you for joining us for our second webinar of the 2022 Tech Against Terrorism & GIFCT e-learning series, discussing “The Gamification of Extremism: Extremist Use of Gaming Platforms”.

Agenda

- Linda Schlegel, Expert Researcher in Online Radicalisation and Extremism, Goethe University

- Galen Lamphere-Englund, Senior Adviser, Preventing and Countering Violent Extremism, Love Frankie

- Liz Thomas, Director of Public Policy, Digital Safety, Microsoft

- Moderators: Isabelle Arnson, Policy Analyst, Tech Against Terrorism, and Dr. Nayanka Paquete Perdigão, Program Associate, GIFCT

- This month we also hosted a webinar to launch our landmark report, "The Threat of Terrorist and Violent Extremist Operated Websites". The report found that global terrorist and violent extremist actors are running at least 198 websites on the surface web and provides an in-depth analysis of the most prominent websites.

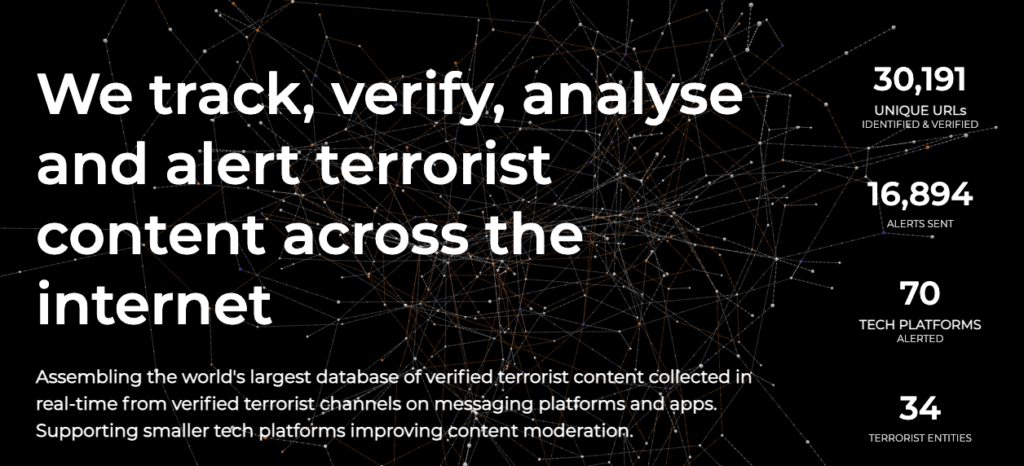

- Thank you to everyone who joined our Terrorist Content Analytics Platform (TCAP) March Office Hours. In this session, we held a special launch event for our TCAP Transparency Report, sharing more information about some key metrics and an update on how we are developing the TCAP through a transparency-by-design approach.

- For those who missed any of our events, webinars or office hours sessions and would like to access the recordings, please get in touch with us at contact@techagainstterrorism.org.

Publications

- This month, we published our first TCAP Transparency Report. The report analyses some key metrics of the TCAP throughout the first full year of TCAP alerts to uphold our transparency-by-design approach. This report also provides crucial insight into how terrorists use online platforms and details the impact the TCAP has in disrupting it.

- Following the Transparency Report, we also published a blog post presenting a comparative analysis of our statistics on content collection and removal rates for Islamist and far-right terrorist groups. You can read the blog post here.

- On 16 February, Tech Against Terrorism hosted the first of a three-part roundtable series discussing the threat of terrorist use of end-to-end encrypted (E2EE) services and how to counter it, with counterterrorism experts, digital rights advocates, and government and tech sector representatives. This month, we published our summary of the roundtable.

- Senior Research Analyst, Anne Craanen, and Junior OSINT Analyst, Charley Gleeson, were published in the recent Radicalisation Awareness Network Spotlight on Digital Ecosystems. Their piece focuses on the overlap between terrorist content, disinformation, and the tech sector response. This article was also republished by VoxPol.

Media Coverage

- Our Executive Director, Adam Hadley, spoke to Der Spiegel about our investigations into the Wagner Group and its links to neo-Nazi movements. You can read the article in German here.

- Tech Against Terrorism’s investigation into Russian-backed forces in Ukraine and their links to neo-Nazi groups has been quoted in The Guardian. The article points to a neo-Nazi presence among Russian-backed mercenaries in Ukraine, and to how these findings offer a stark contradiction to Russia’s narrative of fascists running wild in Ukraine to justify the conflict.

Speaking Events

- Tech Against Terrorism's Executive Director, Adam Hadley, presented at the European Union Internet Forum's (EUIF) Workshop on Terrorist Operated Websites, detailing our recent report on the threat of terrorist and violent extremist operated websites. You can read our full research report here.

- Tech Against Terrorism’s Senior Research Analyst, Anne Craanen, featured on the Behind the Spine podcast, discussing radicalisation and the work of Tech Against Terrorism and the Terrorist Content Analytics Platform in countering terrorist use of the internet.

- Anne Craanen presented at the 5th StratCom Seminar of the Club of Venice on hybrid threats and the role of the Terrorist Content Analytics Platform in countering terrorist use of the internet.

- Tech Against Terrorism’s Head of Policy and Research, Jacob Berntsson, presented at the George Washington University Program on Extremism’s webinar, “The State of Online Terrorist and Extremist Content Removal Policy in the United States.” You can watch a recording here.

Reader's Digest – 8 April 2022

Our regular weekly review of articles on terrorist and violent extremist use of the internet, counterterrorism, digital rights, and tech policy.

Top Stories

- The Economic Community of West African States has ordered the government of Nigeria to amend its cybercrime law, stating that the law unfairly targets journalists and political dissidents, and goes against the African Charter on Human and Peoples’ Rights.

You can read more about online regulation in Nigeria in our Online Regulation Series 2.0 blog post.

- The US Department of State has established the Bureau of Cyberspace and Digital Policy. The Bureau will seek to address security challenges posed by cyberspace, digital technologies, and digital policy.

- JustPaste.it has published its Transparency Report for 2021. The report details JustPaste.it’s enforcement of its Terms of Service, including in response to reports of violating content from government and non-government sources, and states that over 98% of government abuse reports were related to terrorist material.

- Pinterest has announced a new misinformation policy to counter false and misleading information about climate change. The new policy will apply to both user-generated content and advertisements on the site. This change comes after a reported uptick in user searches for topics relating to sustainability and the environment.

- The Verge has published an article detailing a Facebook bug which likely led to increased views of harmful content over a six-month period from October 2021. The internal investigation showed that misinformation, nudity, violence, and Russian-state media were not "downranked" in personalised news feeds.

You can read more about our position on content personalisation and algorithmic amplification here.

- The Soufan Center has released a special report on non-state actors in Ukraine, focusing on foreign fighters, volunteers, and mercenaries. The report focuses on the impact of disinformation and highlights the nexus between state-backed media campaigns and extremist messaging.

You can read more about our investigation into Russian mercenaries in Ukraine in this recent Guardian article.

- RUSI has published an article discussing the benefits and public safety risks of end-to-end encryption, which highlights the false dichotomy between protecting privacy and ensuring national security.

You can read more about our research into terrorist use of end-to-end encryption here.

Tech Policy

- Human Rights Impact Assessment on Meta’s Expansion of End-to-End Encryption: In October 2019, Meta commissioned Business for Social Responsibility (BSR) to undertake a human rights impact assessment of extending end-to-end encryption (E2EE) across Meta’s messaging services, including Facebook Messenger and Instagram direct messages. The assessment aimed to consider the impact of E2EE across services and provide recommendations to Meta on how adverse impacts should be addressed. A main conclusion drawn by the authors is that content removal is just one way of addressing harm, yet it dominates the debate around reducing harm online. However, prevention methods are a useful tool for E2EE services and are essential in maintaining human rights. These prevention methods may include using behavioural signals, public platform information, user reports, and metadata to identify and interrupt harm before it occurs. The authors argue that by increasingly relying on prevention methods, rather than content removal, Meta has the opportunity to reduce harm to vulnerable communities and ensure that human rights are upheld throughout the implementation and expansion of E2EE services. (BSR, 04.04.2022)

For any questions, please get in touch via:

contact@techagainstterrorism.org